AI slop is flooding the open-source community: some projects have started suspending external contributions, and the wave of junk code (“Shitcode”) feels like a DDoS attack.

Open-source project Tldraw has announced that it will stop accepting external contributions after being overwhelmed by AI-generated junk code. Such “AI Slop” severely drains maintainers’ energy, and the community is exploring reputation systems or escrow mechanisms to address the problem.

Overwhelmed by AI-generated content, open-source project Tldraw suspends external PRs

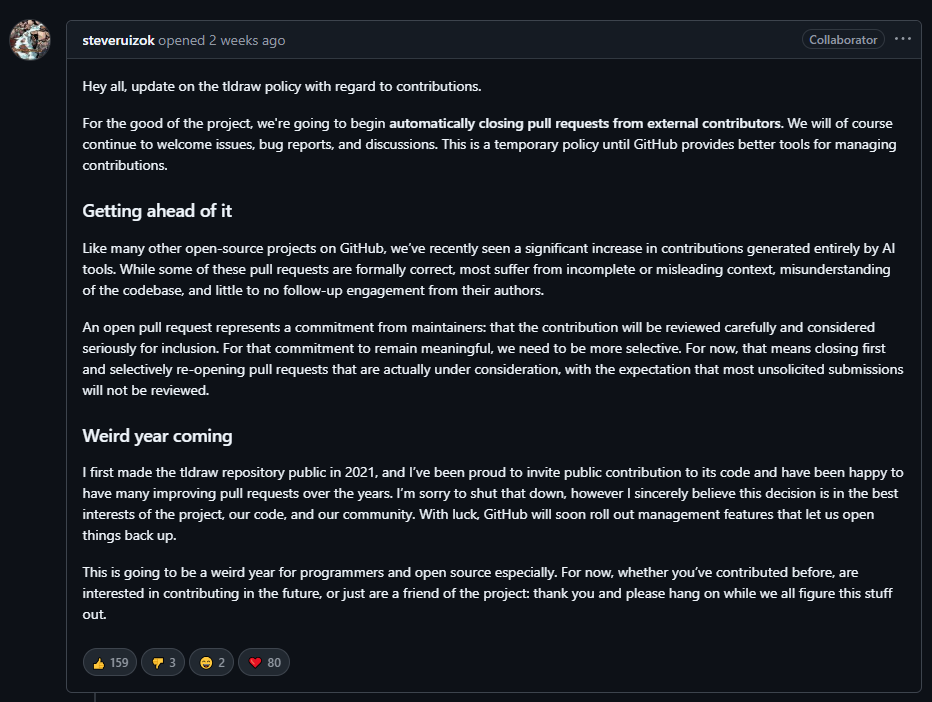

Tldraw, a popular open-source web drawing project with over 40,000 stars on GitHub, recently announced that it will stop accepting pull requests (PRs) from external contributors.

Developer steveruizok pointed out that, like many open-source projects on GitHub, the team has recently seen a significant increase in contributions generated entirely by AI tools. Although some submissions are correct in form, most lack complete context or misunderstand the codebase, and their submitters rarely take part in the follow-up discussion.

steveruizok emphasized that an open PR represents a commitment from maintainers, meaning the contribution will be carefully reviewed and seriously considered for inclusion. To keep this promise meaningful, the team must implement stricter filtering.

Tldraw’s current policy is to close every external PR by default and reopen only those the team genuinely intends to consider, which steveruizok said is in the best interest of the project and its code quality. Although closing the project to public contributions is regrettable, steveruizok stated that the team will only consider reopening it once GitHub introduces better management tools.

Curl and OCaml also affected, AI Slop rampant

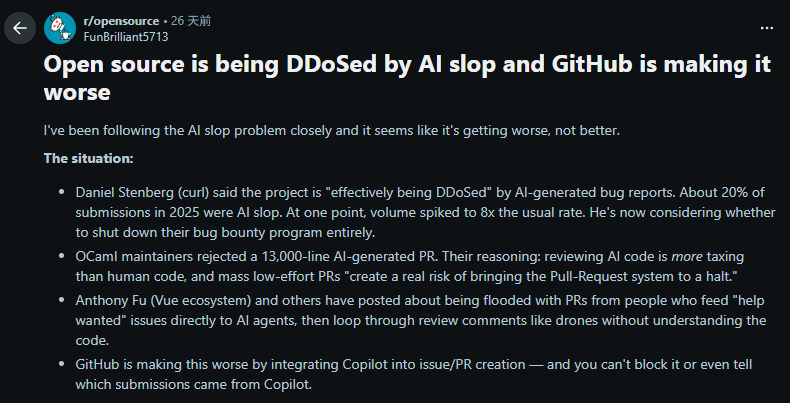

The decision to suspend pull requests at Tldraw is just one recent example of AI Slop (AI garbage) impacting the open-source community. Similar discussions have been heating up on Reddit and Hacker News forums.

According to a Reddit discussion, Daniel Stenberg, maintainer of the well-known transfer tool Curl, said the project is being hit by a flood of erroneous AI-generated reports that amounts to a “DDoS attack.” Approximately 20% of submissions in 2025 are AI slop, which severely consumes volunteer maintainers’ time.

Additionally, OCaml maintainers rejected a 13,000-line AI-generated pull request, explaining that reviewing AI-produced code is more laborious than reviewing hand-written code, and that a flood of low-quality PRs could cause the review system to break down.

Discussions on Hacker News focus on issues with GitHub’s mechanisms.

Some users believe that displaying PR counts prominently on the page encourages people to submit PRs just to inflate their numbers.

The current dilemma around generative AI in open-source communities stems from many inexperienced developers using AI tools to generate worthless code and expecting maintainers to merge it quickly. This behavior is eroding the collaborative trust that open-source communities depend on.

Possible solutions: whitelist or reputation systems

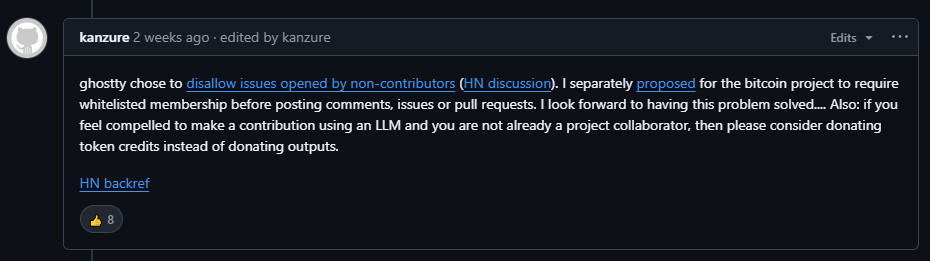

In response to the flood of garbage code (Shitcode) hitting open-source communities, Bitcoin developer Bryan Bishop (kanzure) commented on Tldraw’s GitHub page that he had proposed that the Bitcoin Core development team “privatize” its development process, shifting to a model in which only invited or whitelisted members can post comments and PRs.

Although this might violate the spirit of Bitcoin’s openness, Bryan Bishop believes that it can effectively reduce the noise and useless debates caused by non-contributors, allowing developers to focus on technical work and preventing valuable attention from being diverted by malicious or invalid interactions.

Besides privatization, software engineer Steve Rodrigue suggested establishing a cross-project contributor reputation system that verifies the value of an account through trust networks.

Another developer is working on a blockchain-based “Stake-to-PR” protocol, requiring submitters to pay a small deposit, which is forfeited if the content is deemed AI garbage, and fully refunded if it is a valid contribution. The goal is to raise the barrier to curb AI abuse.
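The deposit flow described above can be illustrated with a small sketch. This is not the developer's actual protocol, whose details the article does not give; it is an in-memory model with no blockchain involved, and every name in it (`StakeEscrow`, `open_pr`, `resolve`, `Verdict`, the `treasury` that collects forfeited deposits) is hypothetical. It also assumes that a maintainer supplies the verdict, since the source does not say who decides whether a PR counts as AI garbage.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, Tuple


class Verdict(Enum):
    VALID = "valid"  # genuine contribution: deposit is refunded in full
    SLOP = "slop"    # deemed AI garbage: deposit is forfeited


@dataclass
class StakeEscrow:
    """Hypothetical in-memory stand-in for a stake-to-PR deposit ledger."""
    deposit: int  # stake required per PR, in arbitrary units
    balances: Dict[str, int] = field(default_factory=dict)  # contributor -> funds
    locked: Dict[int, Tuple[str, int]] = field(default_factory=dict)  # pr_id -> (contributor, amount)
    treasury: int = 0  # forfeited deposits accumulate here (assumption)

    def open_pr(self, pr_id: int, contributor: str) -> None:
        """Lock the contributor's deposit when a PR is opened."""
        if pr_id in self.locked:
            raise ValueError(f"PR {pr_id} already has a locked deposit")
        if self.balances.get(contributor, 0) < self.deposit:
            raise ValueError(f"{contributor} cannot cover the deposit")
        self.balances[contributor] -= self.deposit
        self.locked[pr_id] = (contributor, self.deposit)

    def resolve(self, pr_id: int, verdict: Verdict) -> None:
        """Refund or forfeit the locked deposit based on the verdict."""
        contributor, amount = self.locked.pop(pr_id)
        if verdict is Verdict.VALID:
            self.balances[contributor] += amount
        else:
            self.treasury += amount


if __name__ == "__main__":
    escrow = StakeEscrow(deposit=5, balances={"alice": 10})
    escrow.open_pr(1, "alice")
    escrow.open_pr(2, "alice")
    escrow.resolve(1, Verdict.VALID)  # refunded
    escrow.resolve(2, Verdict.SLOP)   # forfeited
    print(escrow.balances["alice"], escrow.treasury)  # 5 5
```

Even in this toy form, the sketch shows where the barrier comes from: a submitter needs funds up front for every open PR, so mass-submitting low-quality PRs becomes costly while a valid contribution costs nothing in the end.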
