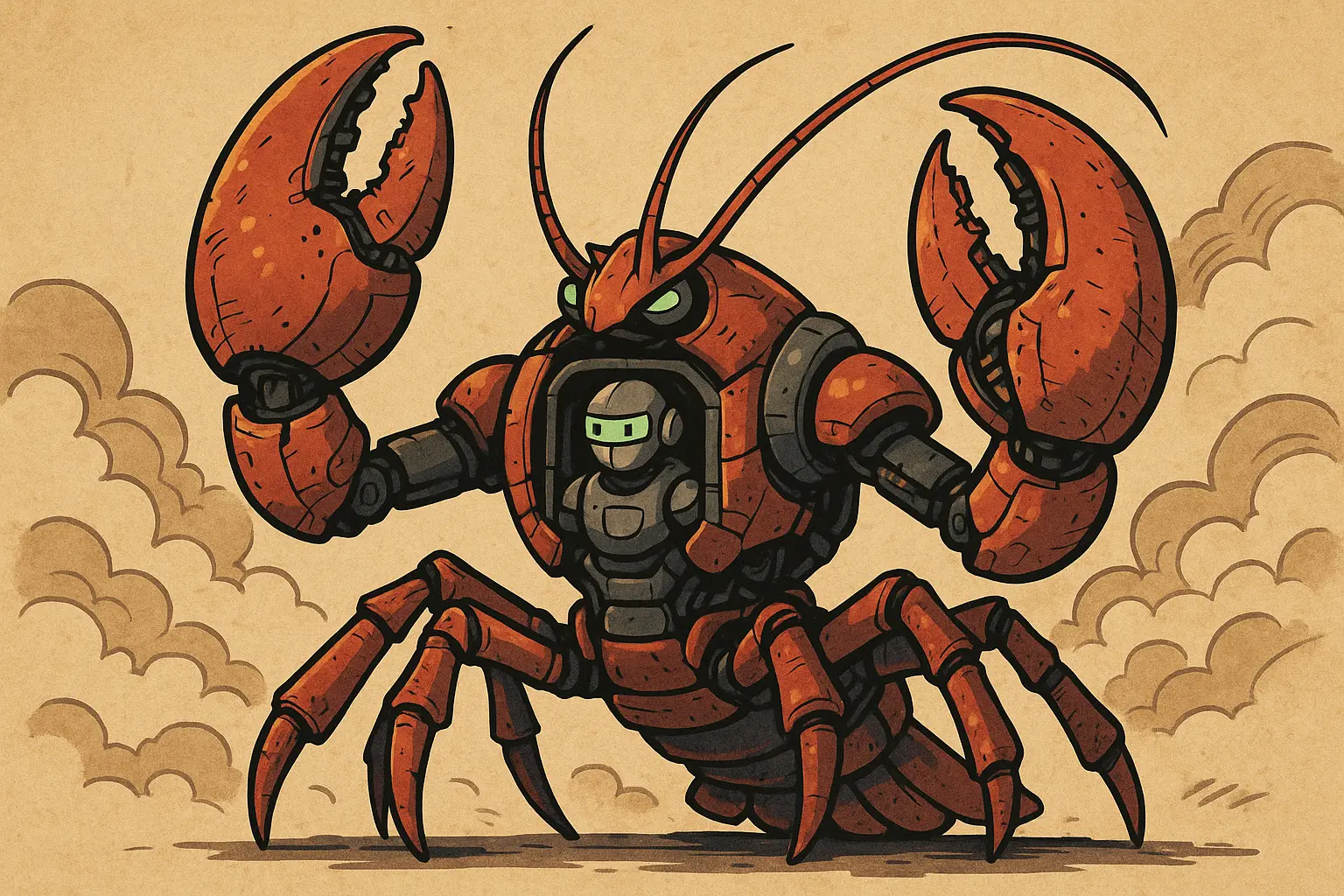

OpenClaw High-Privilege Access Raises Security Concerns as China Releases Secure Usage Practice Guide

The China National Cybersecurity Emergency Response Center (CNCERT) and the China Cybersecurity Society jointly released the “OpenClaw Security Best Practices Guide” on March 22. The guide targets four main groups (general users, enterprise users, cloud service providers, and technical developers) and offers layered security recommendations for each. Its release responds directly to the rapid spread across China of OpenClaw (commonly known as “Lobster”), a tool that is granted extremely high system privileges during operation.

Why OpenClaw’s High System Privileges Alarm Regulators

OpenClaw is an open-source AI agent tool whose core design lets AI systems autonomously perform multi-step tasks, including manipulating local files, initiating network requests, and coordinating across applications. This architecture requires far greater system permissions at runtime than traditional software. If the tool is exploited maliciously or contains security vulnerabilities, attackers could gain full control over user devices, steal sensitive data, or even move laterally into enterprise internal networks.

With this official guide, Chinese authorities are establishing a formal security baseline for AI agent tools while continuing to promote AI commercialization.

Four Key Security Recommendations for General Users

CNCERT’s Core Security Advice for Ordinary Users

Isolated Installation Environment: Install OpenClaw on a dedicated device, virtual machine, or container so that it is separated from other systems. Do not install it on everyday office computers.

Restrict Runtime Permissions: Do not run OpenClaw with administrator or superuser (root) privileges to prevent potential malicious operations from gaining maximum system control.

No Handling of Personal Data: Do not store or process private personal information within the OpenClaw environment, to avoid sensitive data leaks during AI agent task execution.

Timely Version Updates: Keep OpenClaw updated to the latest version to ensure known security vulnerabilities are patched.
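As an illustration of the advice against running with administrator or root privileges, a launcher script could refuse to start when it detects a root user. The following is a minimal Python sketch, not part of the CNCERT guide or of OpenClaw itself. The function names `is_privileged` and `ensure_unprivileged` are hypothetical, and the check covers POSIX systems only (it is skipped where `os.geteuid` is unavailable, such as on Windows).

```python
import os


def is_privileged(euid: int) -> bool:
    """Return True when the effective user ID is root (0) on a POSIX system."""
    return euid == 0


def ensure_unprivileged() -> None:
    """Exit before launching the agent if the current process runs as root.

    Hypothetical helper for illustration only. os.geteuid exists on POSIX
    systems; where it is missing, this sketch performs no check.
    """
    geteuid = getattr(os, "geteuid", None)
    if geteuid is not None and is_privileged(geteuid()):
        raise SystemExit(
            "Refusing to start as root; run the agent under an unprivileged account."
        )
```

A wrapper would call `ensure_unprivileged()` once at startup, before handing control to the agent process.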

Strengthened Requirements for Enterprise Users and Cloud Service Providers

The official guide imposes stricter institutional requirements on enterprise users than on ordinary users. Enterprises must establish internal usage policies and approval processes for OpenClaw. Any new AI agent application or high-privilege feature must pass a security assessment and receive management approval before deployment.

On the technical protection front, enterprises should enable host intrusion detection systems on servers running OpenClaw and generate tamper-proof audit logs for critical operations and security-related events to ensure traceability. Additionally, regular personnel security training and emergency drills should be conducted to enhance overall organizational response capabilities.
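One common way to make audit logs resistant to silent modification is to chain entries together with cryptographic hashes, so that changing any earlier record invalidates every later one. The guide does not prescribe a mechanism, so the Python sketch below is only an assumption about how such a log might look. The names `append_entry`, `verify_chain`, and `GENESIS` are hypothetical, and the result is tamper-evident rather than strictly tamper-proof: an attacker who can rewrite the entire log could still recompute the chain unless the latest hash is also stored somewhere out of reach.

```python
import hashlib
import json

# Placeholder "previous hash" for the first entry (an assumption of this sketch).
GENESIS = "0" * 64


def _digest(body: dict) -> str:
    """Hash an entry body deterministically (keys sorted before serializing)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list, event: str) -> dict:
    """Append an event whose hash covers its content and the previous hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"index": len(log), "event": event, "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash and link; return False if any entry was altered."""
    prev_hash = GENESIS
    for i, entry in enumerate(log):
        body = {
            "index": entry["index"],
            "event": entry["event"],
            "prev_hash": entry["prev_hash"],
        }
        if (
            entry["index"] != i
            or entry["prev_hash"] != prev_hash
            or entry["hash"] != _digest(body)
        ):
            return False
        prev_hash = entry["hash"]
    return True
```

In this sketch, editing the `event` field of any stored entry causes `verify_chain` to return `False`, which gives reviewers a simple integrity check before they rely on the log for traceability.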

For cloud service providers, the guide requires comprehensive evaluation and reinforcement of the underlying security of cloud hosts, deployment of robust security defenses, and a focus on strengthening supply chain and data security protections to prevent security risks introduced through third-party dependencies.

Frequently Asked Questions

Q: What is the core reason CNCERT issued this guide?

OpenClaw requires high-level system access during operation and can manipulate files, make network requests, and act across applications, so a security vulnerability in it is far more serious than one in traditional software. As OpenClaw spreads rapidly across China, regulators are prioritizing the prevention of user data leaks and system security risks.

Q: Why should ordinary users avoid installing OpenClaw on daily office computers?

Office computers typically connect to enterprise intranets and store sensitive documents. If OpenClaw is installed on one without environment isolation and the tool has a vulnerability, work data, enterprise credentials, and personal information could be exposed. Using a dedicated device or virtual machine limits the potential damage.

Q: Does the release of this security guide mean China will fully restrict OpenClaw usage?

Currently, the guide is a set of security recommendations rather than a mandatory ban. Its purpose is to provide a protective framework rather than impose restrictions. China’s official policy continues to promote AI commercialization through local government subsidies while also requiring enterprises and users to follow security standards, maintaining a dual-track approach.